
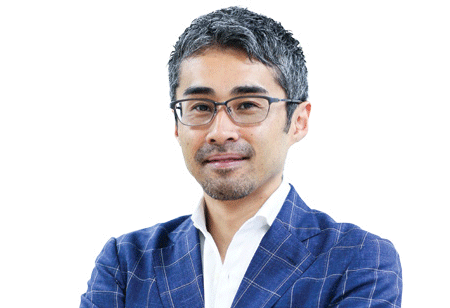

Jung Woo Park, CEO and Co-founder

Mr. Park explains that this imbalance between the training and production sub-markets stems from the heavy, complex nature of AI models. Developers generally build on open-source AI frameworks such as TensorFlow, PyTorch, Caffe, and Keras, which can make AI models unnecessarily convoluted and demand more computing power and higher processing speeds. Park's company, SoyNet, circumvents this hurdle by doubling down on inference, focusing solely on the reasoning part of a model rather than the learning part. In effect, the company sets aside training-related processes and concentrates on accelerating production, eliminating redundancies that could otherwise slow development. "SoyNet has taken an innovative approach to solving bottlenecks within AI implementations. We have stripped away the AI training engine (once training has taken place), leaving only an AI inference engine. The result is a much lighter AI accelerator that boots up quickly and takes less memory," adds Mr. Park.

Accelerating AI with inference-only framework

SoyNet has developed an AI inference-only framework that breathes life into intelligent technologies by serving as a simple but highly versatile framework for AI models. The framework adopts 8-bit quantization and post-training techniques so that the resulting models are faster and lighter and consume less memory.

SoyNet can execute AI models three times faster than TensorFlow while consuming just one-ninth of the memory.

Upholding General-Purpose AI

SoyNet supports the general-purpose AI market extensively. The aforementioned 8-bit quantization technology scales the conventionally used 32-bit floating-point values down to 8 bits, reducing the memory needed to carry out specific operations. Because this also improves processing efficiency, the development of AI solutions for numerous use cases could be accelerated considerably, improving the productivity of developers, who can save both time and resources during the production phase.
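To make the memory arithmetic concrete, the following is a minimal sketch of 8-bit quantization in general, not SoyNet's actual implementation, which the article does not describe. It assumes a simple symmetric, per-tensor scheme; the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical and chosen only for illustration.

```python
from array import array


def quantize_int8(values):
    """Symmetric per-tensor quantization (an assumed scheme, for illustration).

    Maps each float to an integer in [-127, 127] using one shared scale.
    """
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = array(
        "b",  # signed 8-bit integers, 1 byte each
        (max(-127, min(127, round(v / scale))) for v in values),
    )
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the 8-bit integers."""
    return [q * scale for q in quantized]


# Hypothetical weights; a real model would hold millions of these.
weights = [0.12, -0.53, 0.98, -1.27, 0.0, 0.64]

fp32 = array("f", weights)  # 32-bit floats, 4 bytes each
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

fp32_bytes = len(fp32) * fp32.itemsize
int8_bytes = len(q) * q.itemsize
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"float32 storage: {fp32_bytes} bytes")
print(f"int8 storage:    {int8_bytes} bytes")
print(f"largest rounding error: {max_error:.6f} (scale = {scale:.6f})")
```

Storing each value in 8 bits instead of 32 cuts weight storage to a quarter, at the cost of a rounding error of at most half the scale per value. Any savings beyond that factor, such as the one-ninth figure quoted for SoyNet, would have to come from other sources, for example dropping the training engine as Mr. Park describes.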

SoyNet helps these developers reduce time to market by providing expert advice and assistance with the solution's launch. "No matter how good your AI services are, their success largely depends on the timely launch," adds Mr. Park. As the CEO suggests, the time needed to bring an AI solution to market depends largely on the number of iterations required to debug errors, so reducing the number of trials needed to optimize the solution shortens the path to launch.

SoyNet's inference model functions as the rocket fuel that lets teams get past the slow time to market of heavy, sluggish production platforms. Moreover, the company is working toward supporting model migration across platforms such as TensorFlow and PyTorch, a convenient way for developers to transfer their models between ecosystems. These capabilities open the door to the next generation of inference models, which are efficient, easy to use, and quicker to develop with. SoyNet aspires to support AI developers globally by giving them access to the tools and expertise needed to create intuitive, utilitarian, and memory-friendly AI solutions. "We recently conducted a use-case-specific engagement with a customer with the use of our inference-only model, and they were able to reduce their hardware investments by one third. From a financial standpoint, that is a huge win for the customer on a larger scale," explains Mr. Park.


The inference-only accelerator, strengthened by the company's vision of simplifying AI production, offers developers an effective route to launching their AI-powered applications promptly while saving a substantial amount of resources otherwise spent on memory-intensive hardware. SoyNet functions as the foundation for faster and lighter AI solutions, and its inference model gives it the upper hand over competitors in the marketplace.


September 25, 2020
